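Cluster Overview

Horizontal scaling: Redis Cluster scales Redis horizontally. N Redis nodes are started and the whole dataset is distributed across them, so each node stores roughly 1/N of the data.

Partitioned storage: Redis Cluster uses partitioning to provide a degree of availability. Even if some nodes fail or cannot communicate, the cluster can continue to process command requests.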

Setting Up a Redis Cluster

| IP | Ports |
|---|---|
| 127.0.0.1 | Master: 6381, Replica: 6391 |
| 127.0.0.1 | Master: 6382, Replica: 6392 |
| 127.0.0.1 | Master: 6383, Replica: 6393 |

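Cluster Mode Configuration

Prerequisite: stop the Redis services started in the exercises of the previous chapters and delete their persistence files (RDB and AOF).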

In the conf directory, create six instance configuration files, one each for ports 6381, 6382, 6383, 6391, 6392 and 6393.

In redis.conf, change the basic settings as follows:

daemonize yes

dir /usr/local/redis-6.2.7/data

The content of redis_6381.conf is as follows:

include /usr/local/redis-6.2.7/conf/redis.conf

port 6381

pidfile /usr/local/redis-6.2.7/pid/redis_6381.pid

logfile "/usr/local/redis-6.2.7/logs/redis_6381.log"

dbfilename dump_6381.rdb

cluster-enabled yes

cluster-config-file nodes-6381.conf

cluster-node-timeout 15000

Where:

- cluster-enabled yes: enables cluster mode

- cluster-config-file nodes-xxxx.conf: sets the name of the node configuration file

- cluster-node-timeout 15000: sets the node timeout in milliseconds; if a node is unreachable for longer than this, the cluster automatically performs a master/replica failover

Copy redis_6381.conf for the other five instances and change the port number in each copy:

cp redis_6381.conf redis_6382.conf

cp redis_6381.conf redis_6383.conf

cp redis_6381.conf redis_6391.conf

cp redis_6381.conf redis_6392.conf

cp redis_6381.conf redis_6393.conf

sed -i "s/6381/6382/g" redis_6382.conf

sed -i "s/6381/6383/g" redis_6383.conf

sed -i "s/6381/6391/g" redis_6391.conf

sed -i "s/6381/6392/g" redis_6392.conf

sed -i "s/6381/6393/g" redis_6393.conf

Start the six Redis services

In the bin directory, run the following commands:

./redis-server ../conf/redis_6381.conf

./redis-server ../conf/redis_6382.conf

./redis-server ../conf/redis_6383.conf

./redis-server ../conf/redis_6391.conf

./redis-server ../conf/redis_6392.conf

./redis-server ../conf/redis_6393.conf

After startup, the six Redis instances are running, as shown below:

[root@izwz9f92w7soch5m251ghgz bin]# ps -ef | grep redis

root 21829 1 0 22:11 ? 00:00:00 ./redis-server 127.0.0.1:6381 [cluster]

root 21836 1 0 22:11 ? 00:00:00 ./redis-server 127.0.0.1:6382 [cluster]

root 21842 1 0 22:11 ? 00:00:00 ./redis-server 127.0.0.1:6383 [cluster]

root 21848 1 0 22:11 ? 00:00:00 ./redis-server 127.0.0.1:6391 [cluster]

root 21855 1 0 22:11 ? 00:00:00 ./redis-server 127.0.0.1:6392 [cluster]

root 21861 1 0 22:11 ? 00:00:00 ./redis-server 127.0.0.1:6393 [cluster]

root 21868 21536 0 22:11 pts/2 00:00:00 grep --color=auto redis
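The copy-and-substitute step earlier in this section can also be expressed as a small template. The following is only a sketch in Python: the paths and directives are the ones listed for redis_6381.conf, while the names `TEMPLATE`, `render_config` and `write_configs` are hypothetical helpers introduced here for illustration.

```python
from pathlib import Path

# Directives copied from redis_6381.conf, with the port turned into a placeholder.
TEMPLATE = """\
include /usr/local/redis-6.2.7/conf/redis.conf
port {port}
pidfile /usr/local/redis-6.2.7/pid/redis_{port}.pid
logfile "/usr/local/redis-6.2.7/logs/redis_{port}.log"
dbfilename dump_{port}.rdb
cluster-enabled yes
cluster-config-file nodes-{port}.conf
cluster-node-timeout 15000
"""

# Three masters (638x) and three replicas (639x), as in the table above.
PORTS = [6381, 6382, 6383, 6391, 6392, 6393]


def render_config(port):
    """Return the configuration text for one instance."""
    return TEMPLATE.format(port=port)


def write_configs(conf_dir, ports=PORTS):
    """Write redis_<port>.conf for every port and return the file names."""
    out = Path(conf_dir)
    out.mkdir(parents=True, exist_ok=True)
    names = []
    for port in ports:
        path = out / f"redis_{port}.conf"
        path.write_text(render_config(port))
        names.append(path.name)
    return names
```

Compared with `sed -i "s/6381/.../g"`, a template makes it explicit which values depend on the port, so an unrelated occurrence of the digits 6381 elsewhere in the file would not be rewritten by accident.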

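Combining the Standalone Nodes into a Cluster

The six nodes are now combined into one cluster. Before doing so, make sure that every Redis instance has started and that its nodes-xxx.conf file has been generated correctly.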

[root@izwz9f92w7soch5m251ghgz bin]# ll ../data/

total 24

-rw-r--r-- 1 root root 114 Jul 17 22:11 nodes-6381.conf

-rw-r--r-- 1 root root 114 Jul 17 22:11 nodes-6382.conf

-rw-r--r-- 1 root root 114 Jul 17 22:11 nodes-6383.conf

-rw-r--r-- 1 root root 114 Jul 17 22:11 nodes-6391.conf

-rw-r--r-- 1 root root 114 Jul 17 22:11 nodes-6392.conf

-rw-r--r-- 1 root root 114 Jul 17 22:11 nodes-6393.conf

[root@izwz9f92w7soch5m251ghgz bin]#

[root@izwz9f92w7soch5m251ghgz bin]# cat ../data/nodes-6381.conf

3b7018f57fa6a3ed9531dde066bad389bdc08d32 :0@0 myself,master - 0 0 0 connected

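vars currentEpoch 0 lastVoteEpoch 0

Run the following command to create the cluster:

./redis-cli --cluster create --cluster-replicas 1 127.0.0.1:6381 127.0.0.1:6382 127.0.0.1:6383 127.0.0.1:6391 127.0.0.1:6392 127.0.0.1:6393

Check the proposed master/replica assignment: the first three nodes become masters and the last three become replicas. If the assignment shown in the prompt looks correct, type yes.

The output of a successful run is as follows: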

>>> Performing hash slots allocation on 6 nodes...

Master[0] -> Slots 0 - 5460

Master[1] -> Slots 5461 - 10922

Master[2] -> Slots 10923 - 16383

Adding replica 127.0.0.1:6392 to 127.0.0.1:6381

Adding replica 127.0.0.1:6393 to 127.0.0.1:6382

Adding replica 127.0.0.1:6391 to 127.0.0.1:6383

>>> Trying to optimize slaves allocation for anti-affinity

[WARNING] Some slaves are in the same host as their master

M: 3b7018f57fa6a3ed9531dde066bad389bdc08d32 127.0.0.1:6381

slots:[0-5460] (5461 slots) master

M: c19fb65bd33597c5fbef576a1cc66476d129960f 127.0.0.1:6382

slots:[5461-10922] (5462 slots) master

M: 50a556ecbf6fa4a3a9fa9a165567c570faa9166c 127.0.0.1:6383

slots:[10923-16383] (5461 slots) master

S: a7853d33097e531840e8837be76868d4b312d96d 127.0.0.1:6391

replicates 3b7018f57fa6a3ed9531dde066bad389bdc08d32

S: 8d14cfcb9b13b32ce251d1099a56e3ab62c249bb 127.0.0.1:6392

replicates c19fb65bd33597c5fbef576a1cc66476d129960f

S: 0ad6fd75c0cee1fbf0d90daebcf370ef778b5864 127.0.0.1:6393

replicates 50a556ecbf6fa4a3a9fa9a165567c570faa9166c

Can I set the above configuration? (type 'yes' to accept): yes

>>> Nodes configuration updated

>>> Assign a different config epoch to each node

>>> Sending CLUSTER MEET messages to join the cluster

Waiting for the cluster to join

.

>>> Performing Cluster Check (using node 127.0.0.1:6381)

M: 3b7018f57fa6a3ed9531dde066bad389bdc08d32 127.0.0.1:6381

slots:[0-5460] (5461 slots) master

1 additional replica(s)

M: c19fb65bd33597c5fbef576a1cc66476d129960f 127.0.0.1:6382

slots:[5461-10922] (5462 slots) master

1 additional replica(s)

S: 0ad6fd75c0cee1fbf0d90daebcf370ef778b5864 127.0.0.1:6393

slots: (0 slots) slave

replicates 50a556ecbf6fa4a3a9fa9a165567c570faa9166c

S: 8d14cfcb9b13b32ce251d1099a56e3ab62c249bb 127.0.0.1:6392

slots: (0 slots) slave

replicates c19fb65bd33597c5fbef576a1cc66476d129960f

M: 50a556ecbf6fa4a3a9fa9a165567c570faa9166c 127.0.0.1:6383

slots:[10923-16383] (5461 slots) master

1 additional replica(s)

S: a7853d33097e531840e8837be76868d4b312d96d 127.0.0.1:6391

slots: (0 slots) slave

replicates 3b7018f57fa6a3ed9531dde066bad389bdc08d32

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

Viewing Cluster Information with cluster nodes

[root@izwz9f92w7soch5m251ghgz bin]# ./redis-cli -p 6381

127.0.0.1:6381>

127.0.0.1:6381> cluster nodes

c19fb65bd33597c5fbef576a1cc66476d129960f 127.0.0.1:6382@16382 master - 0 1658070459000 2 connected 5461-10922

0ad6fd75c0cee1fbf0d90daebcf370ef778b5864 127.0.0.1:6393@16393 slave 50a556ecbf6fa4a3a9fa9a165567c570faa9166c 0 1658070458000 3 connected

8d14cfcb9b13b32ce251d1099a56e3ab62c249bb 127.0.0.1:6392@16392 slave c19fb65bd33597c5fbef576a1cc66476d129960f 0 1658070460000 2 connected

50a556ecbf6fa4a3a9fa9a165567c570faa9166c 127.0.0.1:6383@16383 master - 0 1658070461306 3 connected 10923-16383

a7853d33097e531840e8837be76868d4b312d96d 127.0.0.1:6391@16391 slave 3b7018f57fa6a3ed9531dde066bad389bdc08d32 0 1658070461000 1 connected

3b7018f57fa6a3ed9531dde066bad389bdc08d32 127.0.0.1:6381@16381 myself,master - 0 1658070457000 1 connected 0-5460

127.0.0.1:6381>

127.0.0.1:6381> cluster info

cluster_state:ok

cluster_slots_assigned:16384

cluster_slots_ok:16384

cluster_slots_pfail:0

cluster_slots_fail:0

cluster_known_nodes:6

cluster_size:3

cluster_current_epoch:6

cluster_my_epoch:2

cluster_stats_messages_ping_sent:3102

cluster_stats_messages_pong_sent:3208

cluster_stats_messages_meet_sent:1

cluster_stats_messages_sent:6311

cluster_stats_messages_ping_received:3208

cluster_stats_messages_pong_received:3103

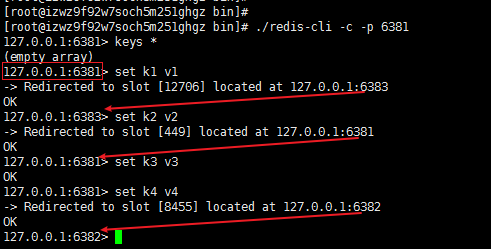

cluster_stats_messages_received:6311采用-c 集群策略连接集群

./redis-cli -c -p 6381

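"Decentralized cluster": data can be written through any master node. If the slot computed for the key is not served by that node, the client is automatically switched to the master that serves it.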

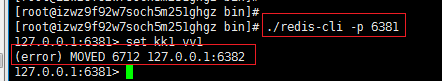

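Note: if you connect in the ordinary way (without -c), writing a key whose slot belongs to another node fails with a MOVED redirection error, as shown below: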

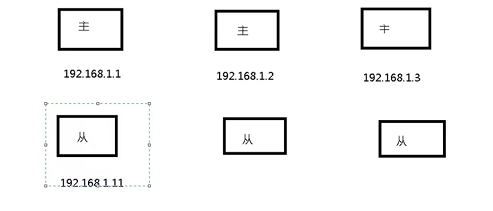
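What the -c option adds can be illustrated with a small simulation. This is a sketch only, not how redis-cli is implemented: the slot ranges are taken from the cluster creation log, the reply format `MOVED <slot> <host>:<port>` is the one Redis returns, and `node_reply` and `set_with_redirect` are hypothetical stand-ins for a server and a cluster-aware client. Hash tags are ignored here.

```python
import binascii

# Slot ranges from the cluster creation log (Python ranges exclude the end value).
SLOT_OWNERS = {
    "127.0.0.1:6381": range(0, 5461),
    "127.0.0.1:6382": range(5461, 10923),
    "127.0.0.1:6383": range(10923, 16384),
}


def key_slot(key):
    """CRC16 (XMODEM variant) of the key, modulo 16384."""
    return binascii.crc_hqx(key.encode("utf-8"), 0) % 16384


def owner_of(slot):
    """Return the address of the master that serves the slot."""
    for addr, slots in SLOT_OWNERS.items():
        if slot in slots:
            return addr
    raise ValueError(f"slot {slot} is not covered")


def node_reply(addr, key):
    """Simulated server: accept the key, or answer with a MOVED error."""
    slot = key_slot(key)
    target = owner_of(slot)
    if target == addr:
        return "OK"
    return f"MOVED {slot} {target}"


def set_with_redirect(addr, key, max_redirects=5):
    """Simulated -c client: follow MOVED replies until a node accepts the key."""
    for _ in range(max_redirects):
        reply = node_reply(addr, key)
        if not reply.startswith("MOVED "):
            return addr  # this node accepted the write
        _, _slot, addr = reply.split()  # retry against the node named in the reply
    raise RuntimeError("too many redirects")


print(node_reply("127.0.0.1:6381", "foo"))         # what a plain client sees
print(set_with_redirect("127.0.0.1:6381", "foo"))  # where a -c client ends up
```

A client without -c stops at the first reply and reports the MOVED error; a client with -c reconnects to the address in the reply and repeats the command.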

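Redis Cluster Node Allocation Principles

A cluster needs at least three master nodes.

The option --cluster-replicas 1 means that one replica is created for each master in the cluster.

For high availability, nodes are allocated as follows:

- Run each master on a different host where possible

- Do not place a replica on the same host as its master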

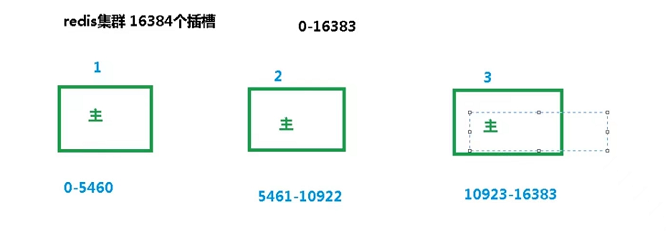
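The two rules above can be checked mechanically. The sketch below is illustrative: `nodes_required` and `same_host_pairs` are hypothetical helper names, `LOCAL` reproduces the master/replica pairs from the creation log in this article, and `SPREAD` is an invented three-host layout with made-up 10.0.0.x addresses.

```python
def nodes_required(masters, replicas_per_master):
    """Total instances needed for a given --cluster-replicas value."""
    return masters * (1 + replicas_per_master)


def same_host_pairs(replica_of):
    """Return (replica, master) pairs that share a host and so violate anti-affinity."""
    def host(addr):
        return addr.rsplit(":", 1)[0]
    return [(r, m) for r, m in replica_of.items() if host(r) == host(m)]


# Replica -> master, as reported by the cluster creation log (single machine).
LOCAL = {
    "127.0.0.1:6391": "127.0.0.1:6381",
    "127.0.0.1:6392": "127.0.0.1:6382",
    "127.0.0.1:6393": "127.0.0.1:6383",
}

# Hypothetical layout: every replica lives on a different host than its master.
SPREAD = {
    "10.0.0.2:6391": "10.0.0.1:6381",
    "10.0.0.3:6392": "10.0.0.2:6382",
    "10.0.0.1:6393": "10.0.0.3:6383",
}

print(nodes_required(3, 1))         # 6
print(len(same_host_pairs(LOCAL)))  # 3
print(same_host_pairs(SPREAD))      # []
```

The single-machine setup in this article fails the check for every pair, which is consistent with the warning "Some slaves are in the same host as their master" printed during cluster creation. That is acceptable for practice but defeats the purpose of replicas in production.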

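Introduction to Slots

Redis Cluster stores key-value pairs using sharding: the cluster's keyspace is divided into 16384 slots.

The cluster uses the formula CRC16(key) % 16384 to compute which slot a key belongs to, and stores the key on the node that serves that slot.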
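The formula can be worked through in a few lines. The sketch below assumes the CRC16 variant used by Redis Cluster is the XMODEM one (polynomial 0x1021, initial value 0), and implements it bit by bit so the calculation is visible; `crc16_xmodem` and `key_slot` are names chosen here for illustration. Hash tags are not handled in this version.

```python
SLOT_COUNT = 16384


def crc16_xmodem(data):
    """Bitwise CRC16 with polynomial 0x1021, initial value 0, no reflection."""
    crc = 0
    for byte in data:
        crc ^= byte << 8          # bring the next byte into the high bits
        for _ in range(8):
            if crc & 0x8000:      # top bit set: shift and apply the polynomial
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_slot(key):
    """Slot number for a key: CRC16(key) % 16384."""
    return crc16_xmodem(key.encode("utf-8")) % SLOT_COUNT


print(hex(crc16_xmodem(b"123456789")))  # 0x31c3
print(key_slot("foo"))                  # 12182
```

With the slot ranges from the creation log, slot 12182 falls in 10923-16383, so the key foo would be stored on the master at port 6383.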

The cluster creation log shown earlier contains the following:

M: 3b7018f57fa6a3ed9531dde066bad389bdc08d32 127.0.0.1:6381

slots:[0-5460] (5461 slots) master

1 additional replica(s)

M: c19fb65bd33597c5fbef576a1cc66476d129960f 127.0.0.1:6382

slots:[5461-10922] (5462 slots) master

1 additional replica(s)

M: 50a556ecbf6fa4a3a9fa9a165567c570faa9166c 127.0.0.1:6383

slots:[10923-16383] (5461 slots) master

1 additional replica(s)

Each master node in the cluster is responsible for a subset of the slots. In the example above, node 6381 serves slots 0-5460.
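The three ranges in the log are what an even split of 16384 slots across three masters looks like. The sketch below reproduces them; the round-half-up rule and the name `split_slots` are assumptions chosen to match the log, not a documented description of how redis-cli allocates slots.

```python
import math

SLOT_COUNT = 16384


def split_slots(masters):
    """Split slots 0..16383 into contiguous ranges, one per master."""
    per_node = SLOT_COUNT / masters   # 5461.33... for three masters
    ranges = []
    first = 0
    cursor = 0.0
    for i in range(masters):
        # Round the ideal end position to the nearest whole slot.
        last = math.floor(cursor + per_node - 1 + 0.5)
        # The final master always takes whatever is left.
        if i == masters - 1 or last > SLOT_COUNT - 1:
            last = SLOT_COUNT - 1
        ranges.append((first, last))
        first = last + 1
        cursor += per_node
    return ranges


for first, last in split_slots(3):
    print(f"{first}-{last} ({last - first + 1} slots)")
```

For three masters this yields 0-5460, 5461-10922 and 10923-16383, that is 5461, 5462 and 5461 slots, matching the log.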

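After logging in with redis-cli -c -p 6381, every time a key is written or read, Redis computes the slot the key belongs to. If that slot is not served by the server the client is connected to, the client is redirected to the server that does serve it.

- Keys that are not in the same slot cannot be used together in multi-key operations such as mget and mset, for example: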

[root@izwz9f92w7soch5m251ghgz bin]# ./redis-cli -c -p 6381

127.0.0.1:6381>

127.0.0.1:6381> mset name lucy age 18 sex girl

(error) CROSSSLOT Keys in request don't hash to the same slot

127.0.0.1:6381>

- {} can be used to define a group (a hash tag), so that keys in the same group are placed in the same slot, for example:

[root@izwz9f92w7soch5m251ghgz bin]# ./redis-cli -c -p 6381

127.0.0.1:6381>

127.0.0.1:6381> mset name{user} lucy age{user} 18 sex{user} girl

-> Redirected to slot [5474] located at 127.0.0.1:6382

OK

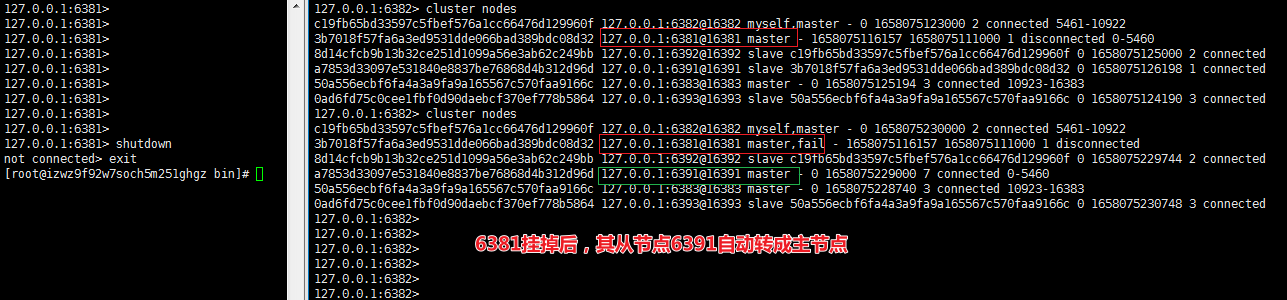
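Why the second mset succeeds can be seen by modelling the hash tag rule: when a key contains a non-empty {...} section, only the text inside the first such section is hashed. The following is a sketch under that assumption; `hash_part`, `key_slot` and `same_slot` are illustrative names, and `binascii.crc_hqx` with an initial value of 0 is used as the CRC16 implementation.

```python
import binascii


def hash_part(key):
    """Return the part of the key that is hashed: the hash tag if present, else the whole key."""
    start = key.find("{")
    if start != -1:
        end = key.find("}", start + 1)
        # An empty tag "{}" does not count; the whole key is hashed instead.
        if end != -1 and end != start + 1:
            return key[start + 1:end]
    return key


def key_slot(key):
    """Slot for a key, taking hash tags into account."""
    return binascii.crc_hqx(hash_part(key).encode("utf-8"), 0) % 16384


def same_slot(*keys):
    """True if a multi-key command over these keys would stay within one slot."""
    return len({key_slot(k) for k in keys}) == 1


print(same_slot("name", "age", "sex"))                    # False: CROSSSLOT
print(same_slot("name{user}", "age{user}", "sex{user}"))  # True: all hash "user"
```

Because all three tagged keys hash only the string user, they land in a single slot (the session above reports 5474) and therefore on a single master.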

Failure Recovery

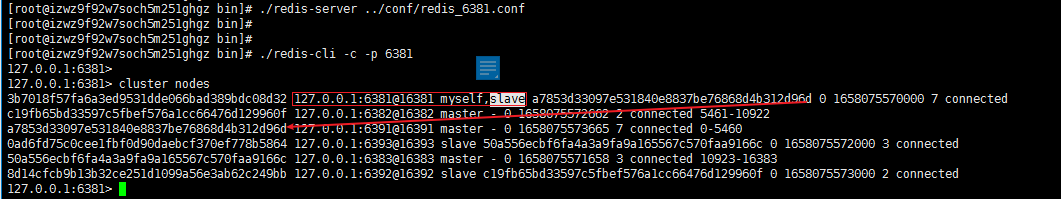

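If a master goes offline, can its replica be promoted to master automatically? Yes.

What happens to the master/replica relationship after the old master recovers? The recovered node rejoins as a replica.

If the master and all replicas for a range of slots go down, can the Redis service continue?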

- If cluster-require-full-coverage is yes, the whole cluster stops serving requests.

- If cluster-require-full-coverage is no, data in the affected slots is unavailable, but the other slots can still be used.
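The two cases can be summarized as a small decision function. This is a deliberately simplified model, not the server's actual logic: it looks only at slot coverage and the cluster-require-full-coverage setting, and `can_serve` and `covered` are hypothetical names. The scenario assumes that both 6382 and 6392 are down, so slots 5461-10922 have no owner.

```python
SLOT_COUNT = 16384


def can_serve(slot, covered_slots, require_full_coverage):
    """Decide whether a request for the given slot is served."""
    fully_covered = len(covered_slots) == SLOT_COUNT
    if require_full_coverage and not fully_covered:
        return False                 # yes: the whole cluster refuses requests
    return slot in covered_slots     # no: only the uncovered slots are unavailable


# Master 6382 and its replica 6392 are both down: slots 5461-10922 are uncovered.
covered = set(range(0, 5461)) | set(range(10923, 16384))

print(can_serve(100, covered, require_full_coverage=True))    # False
print(can_serve(100, covered, require_full_coverage=False))   # True
print(can_serve(6000, covered, require_full_coverage=False))  # False
```

Setting the option to no trades consistency of the keyspace for partial availability: keys on the surviving masters stay reachable, while keys in the lost range return errors until a node for that range comes back.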
